OMPrisma: Spatial Sound Synthesis in OpenMusic

| Participants: | Marlon Schumacher (author), Sean Ferguson (supervisor), Jean Bresson (supervisor) |
|---|---|
| Funding: | NSERC/CCA Spatialization, FQRNT, CIRMMT (travel/exchange grants) |
| License: | LGPL |
| Time Period: | 2009–present (ongoing) |

Overview

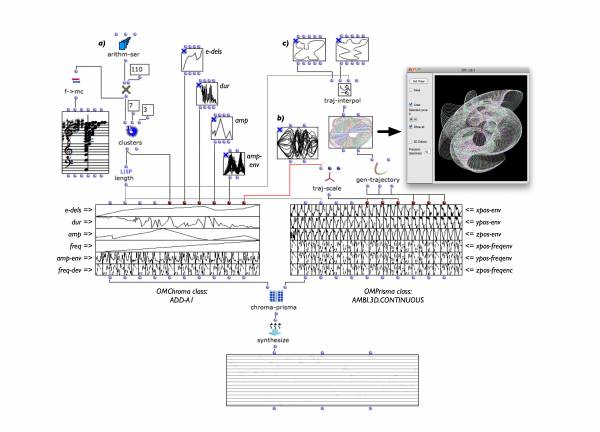

OMPrisma is a library for spatial sound synthesis in the computer-aided composition environment OpenMusic.

In addition to working with pre-existing sound sources (i.e., sound files), it permits the synthesis of sounds with complex spatial morphologies. These morphologies are controlled by processes developed in OpenMusic, in relation to other sound synthesis parameters and to the symbolic data of a compositional framework.

OMPrisma's system architecture separates the authoring of spatial sound scenes from their rendering and reproduction (see the ISASA 2010 paper). This approach provides an abstraction layer. Through it, alternative realizations of the same spatial sound scene description can be rendered using different spatialization techniques and loudspeaker arrangements.
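The sketch below illustrates the idea of separating a scene description from its rendering. It is written in Python rather than in OpenMusic/Lisp, and every class and function in it (`SceneDescription`, `nearest_speaker`, `cosine_spread`, `render`) is hypothetical, not part of OMPrisma's API. A single source trajectory is described once. It is then rendered by two interchangeable toy renderers onto a loudspeaker layout given as azimuths in degrees.

```python
import math
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

# Hypothetical renderer-independent scene description: one source trajectory
# given as (time in seconds, azimuth in degrees) breakpoints.
@dataclass
class SceneDescription:
    trajectory: List[Tuple[float, float]]

    def azimuth_at(self, t: float) -> float:
        """Linearly interpolate the azimuth at time t, clamped to the end points."""
        pts = self.trajectory
        if t <= pts[0][0]:
            return pts[0][1]
        for (t0, a0), (t1, a1) in zip(pts, pts[1:]):
            if t0 <= t <= t1:
                return a0 + (a1 - a0) * (t - t0) / (t1 - t0)
        return pts[-1][1]

# A renderer turns (source azimuth, loudspeaker azimuths) into one gain per loudspeaker.
Renderer = Callable[[float, Sequence[float]], List[float]]

def angular_distance(a: float, b: float) -> float:
    """Smallest absolute angle between two azimuths, in degrees."""
    return abs((a - b + 180.0) % 360.0 - 180.0)

def nearest_speaker(azimuth: float, speakers: Sequence[float]) -> List[float]:
    """Toy renderer: send the whole signal to the closest loudspeaker."""
    dists = [angular_distance(azimuth, s) for s in speakers]
    best = dists.index(min(dists))
    return [1.0 if i == best else 0.0 for i in range(len(speakers))]

def cosine_spread(azimuth: float, speakers: Sequence[float]) -> List[float]:
    """Toy renderer: clipped-cosine gains normalized to constant total power."""
    raw = [max(0.0, math.cos(math.radians(azimuth - s))) for s in speakers]
    norm = math.sqrt(sum(g * g for g in raw)) or 1.0
    return [g / norm for g in raw]

def render(scene: SceneDescription, renderer: Renderer,
           speakers: Sequence[float], times: Sequence[float]) -> List[List[float]]:
    """Render the same scene description with any renderer and any layout."""
    return [renderer(scene.azimuth_at(t), speakers) for t in times]
```

Swapping `nearest_speaker` for `cosine_spread`, or a four-speaker layout for another one, changes only the `render` call. The scene description itself stays untouched, which is the point of the abstraction layer.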

Version 2.0

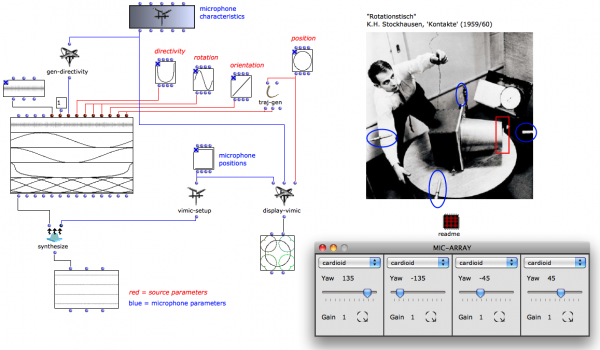

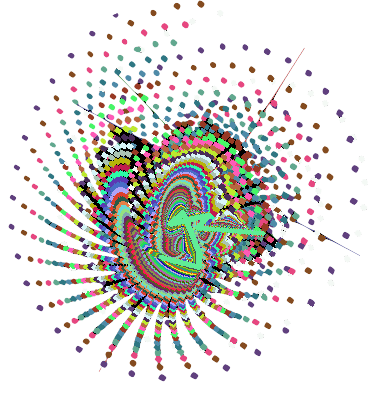

While OMPrisma 1.0 focused on compositional control of sweet-spot-based spatialization techniques (such as VBAP or Ambisonics), OMPrisma 2.0 introduces new classes implementing spatialization techniques for non-centralized audiences (such as dbap, babo, or ViMiC). These allow reproduction setups with arbitrary loudspeaker placements, e.g., on stage, among the audience, or in installation contexts. The screenshot above, for example, shows a patch in which an OMPrisma class for Virtual Microphone Control (ViMiC) is used to simulate the spatialization technique employed in K.H. Stockhausen's “Kontakte” (1959/1960). That technique used a rotating table with a mounted directional loudspeaker (the sound source) and four stationary microphones placed around it. A detailed description of this technique can be found in: Braasch, J., Peters, N., and Valente, D. L. (2008). A loudspeaker-based projection technique for spatial music applications using Virtual Microphone Control. Computer Music Journal, 32(3):55–71.
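The “Kontakte” setup can be illustrated with a deliberately simplified gain model. It is written in Python, and the function names are hypothetical. A cardioid-shaped source directivity rotates past four equidistant, omnidirectional microphones. This is an assumption-laden toy, not the ViMiC equations used by OMPrisma: a full ViMiC rendering also accounts for microphone directivity, distance-dependent attenuation, and propagation delays. The sketch only shows why rotating a directional source produces a sound image that moves across four channels.

```python
import math
from typing import List, Sequence

def cardioid(off_axis_rad: float) -> float:
    """First-order cardioid: 1 on-axis, 0.5 at 90 degrees, 0 directly behind."""
    return 0.5 * (1.0 + math.cos(off_axis_rad))

def rotating_source_gains(rotation_deg: float,
                          mic_azimuths_deg: Sequence[float] = (0.0, 90.0, 180.0, 270.0)) -> List[float]:
    """Gain at each stationary microphone for a directional loudspeaker at the
    centre of the table, pointing toward rotation_deg. The microphones are
    assumed omnidirectional and equidistant, so only the source directivity
    differs between them."""
    return [cardioid(math.radians(mic - rotation_deg)) for mic in mic_azimuths_deg]

def table_rotation(t_s: float, revs_per_s: float) -> float:
    """Azimuth of the rotating table, in degrees, at time t_s for a constant speed."""
    return (360.0 * revs_per_s * t_s) % 360.0
```

Evaluating `rotating_source_gains(table_rotation(t, revs_per_s))` over successive times `t` yields four gain envelopes that peak one after another. That is the circular motion heard in the four-channel result.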

Class library of spatial sound renderers

OMPrisma currently implements the following classes for spatial sound rendering.

All classes render perceptual distance cues: attenuation, air absorption, and the Doppler effect.
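The three distance cues can be sketched for a single source in Python as follows:

* attenuation uses the inverse-distance law;
* propagation delay assumes a speed of sound of 343 m/s, and varying this delay over time produces the Doppler effect;
* air absorption uses a crude lowpass model.

The linear cutoff-versus-distance mapping, the reference distance, and all parameter defaults are hypothetical illustrations, not values taken from OMPrisma, whose actual filters and curves may differ.

```python
SPEED_OF_SOUND = 343.0  # m/s, approximately, in air at 20 degrees C

def distance_cues(distance_m: float, ref_m: float = 1.0,
                  max_cutoff_hz: float = 20000.0, min_cutoff_hz: float = 1000.0,
                  absorption_range_m: float = 100.0):
    """Return (gain, delay_s, lowpass_cutoff_hz) for a source at distance_m."""
    # Attenuation: inverse-distance law (-6 dB per doubling), clamped inside ref_m.
    gain = ref_m / max(distance_m, ref_m)
    # Propagation delay; modulating it as the source moves yields the Doppler shift.
    delay_s = distance_m / SPEED_OF_SOUND
    # Air absorption (hypothetical mapping): the lowpass cutoff falls linearly
    # with distance up to absorption_range_m, then stays at min_cutoff_hz.
    frac = min(distance_m / absorption_range_m, 1.0)
    cutoff_hz = max_cutoff_hz - frac * (max_cutoff_hz - min_cutoff_hz)
    return gain, delay_s, cutoff_hz

def doppler_ratio(radial_velocity_mps: float) -> float:
    """Frequency ratio heard from a moving source by a static listener
    (positive velocity means the source is moving away)."""
    return SPEED_OF_SOUND / (SPEED_OF_SOUND + radial_velocity_mps)
```

In a real renderer, `delay_s` would drive an interpolating delay line per source. The Doppler shift then emerges from the changing delay rather than being applied separately; `doppler_ratio` only states the expected pitch change.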

| OMPrisma Class | Description | 2D/3D | Sweet spot | Local/global | ICLDs (inter-channel level differences) | ICTDs (inter-channel time differences) | Room model |
|---|---|---|---|---|---|---|---|
| ambi | higher-order ambisonics | 3D | Y | global | X | | |
| babo | ball-in-a-box | 3D | N | global | X | X | physical (resonator) |
| dbap | distance-based amplitude panning | 3D | N | global | X | | |
| panning | pan-pot (transfer functions) | 2D | Y | local | X | | |
| rvbap | vector-base amplitude panning | 3D | Y | hybrid | X | | signal (FDN, feedback delay network) |
| spat | ambisonics | 3D | Y | global | X | | geometric (source-image) |
| vbap | vector-base amplitude panning | 3D | Y | local | X | | |
| vimic | virtual microphone control | 3D | N | global | X | X | |
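As an illustration of one row of the table (dbap), here is a minimal Python sketch of distance-based amplitude panning in its commonly published form. Each loudspeaker gain is $g_i = k / d_i^{a}$, with rolloff exponent $a = R / (20 \log_{10} 2)$ for a rolloff of $R$ dB per doubling of distance. The constant $k$ is chosen so that $\sum_i g_i^2 = 1$. The function name, the equal loudspeaker weights, and the default "spatial blur" value are assumptions; whether OMPrisma's dbap class uses exactly these parameters is not stated in the source.

```python
import math
from typing import List, Sequence

def dbap_gains(source: Sequence[float], speakers: Sequence[Sequence[float]],
               rolloff_db: float = 6.0, blur: float = 0.1) -> List[float]:
    """Distance-based amplitude panning gains with equal loudspeaker weights.

    source and speakers are 2D or 3D coordinates in the same units.
    rolloff_db is the attenuation per doubling of distance; blur is a spatial
    blur term added to every distance so that gains stay finite when the
    source sits exactly on a loudspeaker."""
    a = rolloff_db / (20.0 * math.log10(2.0))  # 6 dB gives a close to 1 (inverse distance)
    dists = [math.sqrt(math.dist(source, spk) ** 2 + blur ** 2) for spk in speakers]
    inv = [1.0 / d ** a for d in dists]
    k = 1.0 / math.sqrt(sum(v * v for v in inv))  # normalize: sum of squared gains is 1
    return [k * v for v in inv]
```

Because no loudspeaker is treated as "front" and no listening position appears in the formula, the gains depend only on source and loudspeaker positions. This is why the table marks dbap as having no sweet spot.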

Reproduction & Auralization

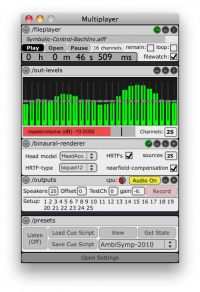

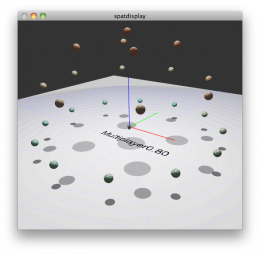

In addition to the authoring and rendering of spatial sound scenes, a third component of the OMPrisma framework is dedicated to reproduction (decoding, diffusion), which often requires tweaking and adaptation for a given venue. For flexible real-time adjustments, we have developed a standalone Max/MSP application using the Jamoma framework. This application, the “MultiPlayer”, offers three main functions:

- processing (rotation, transformation) and decoding of B-format/Higher-Order Ambisonics files;
- graphical tools for configuring loudspeaker arrangements, with automatic or manual compensation of time-delay and amplitude differences;
- binaural reproduction/simulation via HRTF convolution with virtual loudspeaker positions.
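The MultiPlayer itself is a Max/MSP application. As a language-neutral illustration, here are two of the operations mentioned above in Python, with hypothetical function names:

- a yaw rotation of one first-order B-format frame (W, X, Y, Z), assuming the usual convention of X pointing front and Y pointing left;
- a simple automatic delay/gain compensation for loudspeakers at unequal distances from the listening position.

These are textbook versions, not the MultiPlayer's actual algorithms, and higher-order rotation is not shown.

```python
import math
from typing import List, Sequence, Tuple

SPEED_OF_SOUND = 343.0  # m/s, approximate

def rotate_bformat_yaw(w: float, x: float, y: float, z: float,
                       angle_deg: float) -> Tuple[float, float, float, float]:
    """Rotate one first-order B-format frame about the vertical axis.
    W (omni) and Z (vertical) are unchanged; X/Y rotate like a 2D vector,
    so a positive angle moves sources counterclockwise (toward the left)."""
    a = math.radians(angle_deg)
    c, s = math.cos(a), math.sin(a)
    return w, x * c - y * s, x * s + y * c, z

def speaker_compensation(distances_m: Sequence[float]) -> List[Tuple[float, float]]:
    """(delay_s, gain) per loudspeaker so that every loudspeaker appears to be
    as far away as the farthest one: nearer loudspeakers are delayed by the
    extra travel time and attenuated according to the inverse-distance law."""
    far = max(distances_m)
    return [((far - d) / SPEED_OF_SOUND, d / far) for d in distances_m]
```

Compensating toward the farthest loudspeaker keeps every delay non-negative and every gain at or below 1. This avoids clipping when the compensation is applied to an already normalized signal.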

Publications

- Marlon Schumacher and Jean Bresson. "Compositional Control of Periphonic Sound Spatialization." 2nd International Symposium on Ambisonics and Spherical Acoustics, Paris, France, 2010.

- Jean Bresson, Carlos Agon, and Marlon Schumacher. "Représentation des données de contrôle pour la spatialisation dans OpenMusic." Actes des Journées d'Informatique Musicale (JIM), Rennes, France, 2010.

- Marlon Schumacher and Jean Bresson. "Spatial Sound Synthesis in Computer-Aided Composition." (version including errata) Organised Sound, 15(3): 271–289, 2010. doi: 10.1017/S1355771810000300

- Jean Bresson and Marlon Schumacher. "Representation and Interchange of Sound Spatialization Data for Compositional Applications." Proc. of the International Computer Music Conference (ICMC), Huddersfield, UK, 2011.

Artistic Works

OMPrisma has been used for the composition and realization of a number of works, most notably:

- “Continuous snapshots” by Sébastien Gaxie (2013). For piano and electronics. Commissioned by the Ircam ManiFeste Festival 2013. Premiered by David Lively on June 6, 2013, at the Centre Pompidou, Paris.
  - Video interview on Dailymotion.

- “Codex XIII” by Richard Barrett (2013). Improvisational structure for large ensemble and electronics. Commissioned by the Symposium Composing Spaces 2013, Royal Conservatory of Den Haag, Netherlands. Premiered April 12, 2013.

- “Spielraum” by Marlon Schumacher (2013). For violin, cello, digital musical instruments, and live electronics. Commissioned by VGCS/CIRMMT for the Research-Creation Project "Les Gestes", Montreal, Canada, March 2013.

- “Spin” by Marlon Schumacher (2012). For extended V-Drums, interactive video, and live electronics. Commissioned by Codes D'Acces. Premiered at the "Prisma et Les Messagers" concert, Usine-C, Montreal, Canada, April 2012.

- “Re Orso” by Marco Stroppa (?–2011). Opera, libretto by Catherine Ailloud-Nicolas and Giordano Ferrari after Arrigo Boito. Commissioned by Opéra Comique, Théâtre Royal de la Monnaie, Ensemble InterContemporain, Françoise and Jean-Philippe Billarant, and IRCAM-Centre Pompidou, Paris. Premiere: May 19, 2011.

- “Ab-Tasten” by Marlon Schumacher (2011). For computer-controlled piano, 4 virtual pianos, and spatial sound synthesis. Commissioned by CIRMMT. Premiered at the live@CIRMMT concert Clavisphere, Montreal, Canada, 2011.
  - Binaural live recording, McGill MultiMedia Room, April 14, 2011.

- “Cognitive Consonance” by Christopher Trapani (2010). For two plucked-string soloists, ensemble, and electronics. Commissioned by IRCAM. Premiered at the Agora festival, Paris, France, 2010.
  - External project page with sound examples, score, etc.

- “Construction” by Richard Barrett (2005–2011). For three vocalists, ensemble, and electronics. Commissioned by the Liverpool Capital of Culture, produced by HCMF, and supported by Sound and Music and ELISION, with the friendly support of the Ernst von Siemens Music Foundation; also supported by the British Council and SIAL/RMIT University (Australian Research Council). Premiered at the Huddersfield Contemporary Music Festival, UK, 2011.
  - HCMF page with interview, video trailer, etc.